The race to develop faster and more powerful chips for AI and machine learning intensified this week as a company better known for its social media technology and controversial chief, Meta, fired the latest salvo.

A blog post on Meta’s website Wednesday revealed that the company has unveiled the second generation of its Meta Training and Inference Accelerator (MTIA), a chip meant to power the company’s AI infrastructure. Meta launched the first version of this chip last year and is touting performance improvements in the second-generation part.

Unlike chipmakers Intel and Nvidia, which in recent months have been locked in a battle to produce faster and more powerful processors for AI and high-end computing, Meta is not aiming at mass-market AI customers with its part. Rather, the company has chosen the custom silicon route to meet its own AI processing needs.

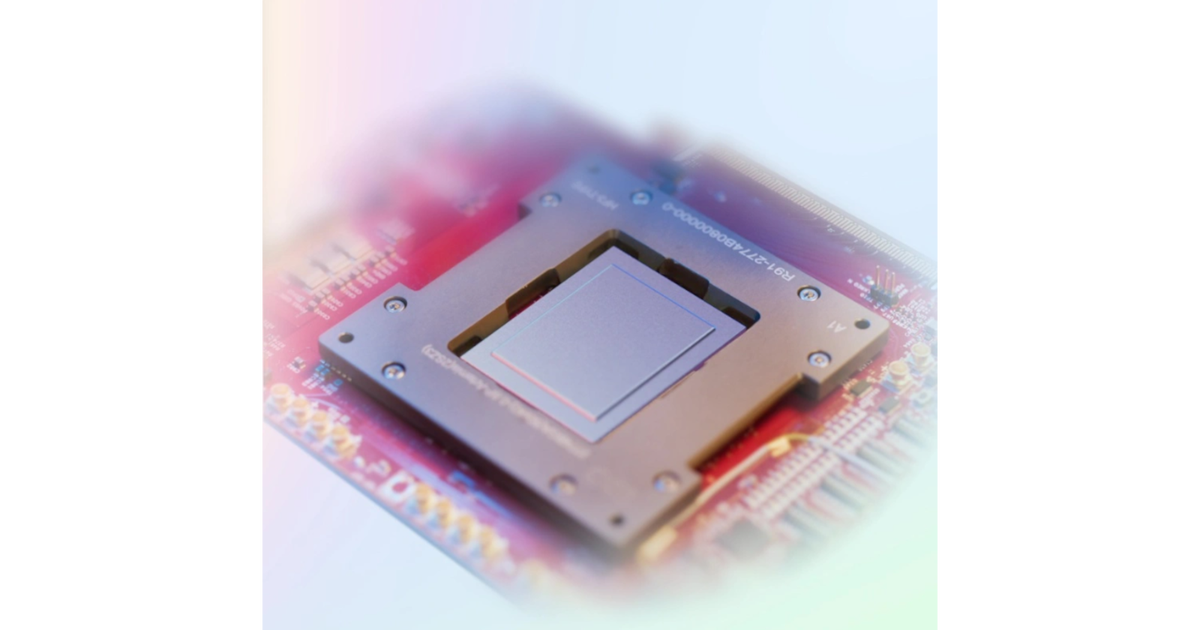

Inside Meta’s Accelerator

According to Meta, the accelerator comprises an 8×8 grid of processing elements (PEs). These elements provide significantly increased dense compute performance (3.5x over the predecessor, MTIA v1) and sparse compute performance (a 7x improvement). Meta says these improvements stem from enhancing the architecture associated with pipelining of sparse compute.

Meta also tripled the size of the local PE storage, doubled the on-chip SRAM from 64 to 128 MB, increased its bandwidth by 3.5x, and doubled the capacity of LPDDR5. The new chip runs at a clock rate of 1.35 GHz, up from 800 MHz previously. Meta built its new chip, which is physically larger than its predecessor, with a 5-nm rather than 7-nm process.
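As a quick sanity check, the generational ratios can be worked out directly from the numbers quoted above. This is a minimal sketch that uses only the figures in the article; the constant and function names are illustrative and do not come from Meta.

```python
# Figures as quoted in the article; all names here are illustrative only.
V1_CLOCK_MHZ = 800
V2_CLOCK_MHZ = 1350  # 1.35 GHz expressed in MHz
V1_SRAM_MB = 64
V2_SRAM_MB = 128


def ratio(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


clock_uplift = ratio(V2_CLOCK_MHZ, V1_CLOCK_MHZ)
sram_uplift = ratio(V2_SRAM_MB, V1_SRAM_MB)

print(f"Clock uplift: {clock_uplift:.2f}x")  # Clock uplift: 1.69x
print(f"SRAM uplift: {sram_uplift:.1f}x")    # SRAM uplift: 2.0x
```

The clock increase works out to roughly 1.69x, so the quoted 3.5x dense compute gain would seem to depend on more than clock speed alone.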

To support the next-generation silicon, Meta developed a large, rack-based system that holds up to 72 accelerators. This system comprises three chassis, each containing 12 boards that house two accelerators each. The configuration is intended to accommodate higher compute, memory bandwidth, and memory capacity.
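The 72-accelerator figure follows from the rack layout described above. A minimal sketch of that arithmetic, with hypothetical constant and function names chosen for illustration:

```python
# Rack layout as described in the article; names are illustrative only.
CHASSIS_PER_RACK = 3
BOARDS_PER_CHASSIS = 12
ACCELERATORS_PER_BOARD = 2


def accelerators_per_rack(
    chassis: int = CHASSIS_PER_RACK,
    boards: int = BOARDS_PER_CHASSIS,
    per_board: int = ACCELERATORS_PER_BOARD,
) -> int:
    """Multiply out the hierarchy: rack -> chassis -> board -> accelerator."""
    return chassis * boards * per_board


print(accelerators_per_rack())  # 72
```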

On the software side, Meta said in its blog that it further optimized its software stack, creating the Triton-MTIA compiler backend to generate high-performance code for the MTIA hardware. The Triton-MTIA backend performs optimizations to maximize hardware utilization and support high-performance kernels.

Google’s In-House Effort

Like Meta, Google is going in-house with custom silicon for its AI development. During the company’s Cloud Next computing event Tuesday, Google reportedly released details of a version of its AI chip for data centers and announced an Arm-based central processor. The tensor processing unit (TPU), which Google is not selling directly but is making available to developers through Google Cloud, can reportedly achieve twice the performance of Google’s previous TPUs.

Google’s new Arm-based central processing unit (CPU), called Axion, reportedly offers better performance than x86 chips. Google will also offer Axion through Google Cloud.

Don’t Forget Intel

Not to be left out, Intel earlier this week revealed its Gaudi 3 AI accelerator during the company’s Intel Vision event. Compared to its predecessor, Gaudi 3 is designed to deliver four times faster AI computing, provide a 1.5x increase in memory bandwidth, and double the networking bandwidth for massive system scale-out.

Intel expects the chip to significantly improve performance and productivity for AI training and inference on popular large language models (LLMs) and multimodal models.

The Intel Gaudi 3 accelerator is manufactured on a 5 nanometer (nm) process and is designed to allow activation of all engines in parallel, including the Matrix Multiplication Engine (MME), Tensor Processor Cores (TPCs), and Networking Interface Cards (NICs), enabling the acceleration needed for fast, efficient deep learning computation and scale.

Intel’s Gaudi 3 accelerator. (Intel)

Key features of Gaudi 3 include:

- AI-Dedicated Compute Engine: Each Intel Gaudi 3 MME can perform 64,000 parallel operations, allowing a high degree of computational efficiency and enabling it to handle complex matrix operations, a type of computation fundamental to deep learning algorithms.

- Memory Boost for LLM Capacity Requirements: 128 gigabytes (GB) of HBM2e memory capacity, 3.7 terabytes per second (TB/s) of memory bandwidth, and 96 megabytes (MB) of on-board static random access memory (SRAM) provide ample memory for processing large GenAI datasets on fewer Intel Gaudi 3s, which is particularly useful in serving large language and multimodal models.

- Efficient System Scaling for Enterprise GenAI: Twenty-four 200 gigabit (Gb) Ethernet ports are integrated into every Intel Gaudi 3 accelerator, providing flexible and open-standard networking. They enable efficient scaling to support large compute clusters and eliminate vendor lock-in from proprietary networking fabrics.
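To put the networking figure in perspective, the aggregate Ethernet bandwidth per accelerator can be estimated from the port count and per-port speed quoted above. This is a rough sketch that assumes each port runs at its full 200 gigabits per second line rate and ignores protocol overhead; the names are illustrative and not taken from Intel.

```python
# Port figures as quoted in the article; names are illustrative only.
PORTS_PER_ACCELERATOR = 24
PORT_SPEED_GBPS = 200  # assumed line rate, gigabits per second per port


def aggregate_bandwidth_gbps(
    ports: int = PORTS_PER_ACCELERATOR,
    speed_gbps: int = PORT_SPEED_GBPS,
) -> int:
    """Raw aggregate line rate across all ports, in gigabits per second."""
    return ports * speed_gbps


total_gbps = aggregate_bandwidth_gbps()
total_tbps = total_gbps / 1000       # terabits per second
total_gbytes_per_s = total_gbps / 8  # gigabytes per second (8 bits per byte)

print(total_gbps)          # 4800
print(total_tbps)          # 4.8
print(total_gbytes_per_s)  # 600.0
```

Under these assumptions, each accelerator has 4.8 terabits per second of raw Ethernet capacity; real-world throughput would be lower once protocol overhead is accounted for.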