From surveillance and access control to smart factories and predictive maintenance, the deployment of artificial intelligence (AI) built around machine learning (ML) models is becoming ubiquitous in industrial IoT edge-processing applications. With this ubiquity, the building of AI-enabled solutions has become ‘democratized’, moving from being a specialist discipline of data scientists to one that embedded system designers are expected to understand.

The challenge of such democratization is that designers are not necessarily well equipped to define the problem to be solved or to capture and organize data appropriately. Unlike consumer solutions, there are few datasets for industrial AI implementations, meaning they often have to be created from scratch, from the user’s data.

Into the Mainstream

AI has gone mainstream, and deep learning and machine learning (DL and ML, respectively) are behind many applications we now take for granted, such as natural language processing, computer vision, predictive maintenance, and data mining. Early AI implementations were cloud- or server-based, requiring immense processing power, storage, and high bandwidth between AI/ML applications and the edge (endpoint). And while such set-ups are still needed for generative AI applications such as ChatGPT, DALL-E and Bard, recent years have seen the arrival of edge-processed AI, where data is processed in real time at the point of capture.

Edge processing greatly reduces reliance on the cloud, makes the overall system/application faster, requires less power, and costs less. Many also consider security to be improved, but it may be more accurate to say the main security focus shifts from protecting in-flight communications between the cloud and the endpoint to making the edge device more secure.

AI/ML at the edge can be implemented on a traditional embedded system, for which designers have access to powerful microprocessors, graphics processing units, and an abundance of memory devices; resources akin to a PC. However, there is a growing demand for IoT devices (commercial and industrial) to feature AI/ML at the edge, and they typically have limited hardware resources and, in many cases, are battery-powered.

The potential for AI/ML at the edge running on resource- and power-restricted hardware has given rise to the term TinyML. Example uses exist in industry (for predictive maintenance, for instance), building automation (environmental monitoring), construction (overseeing the safety of personnel), and security.

The Data Flow

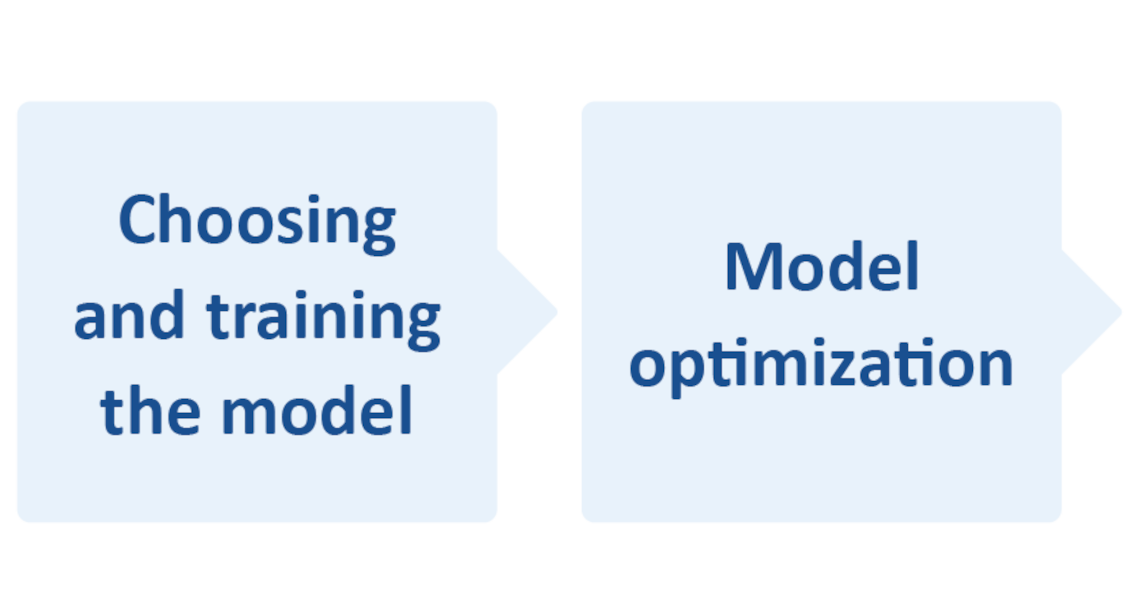

AI (and by extension its subset ML) requires a workflow from data capture/collection to model deployment (Figure 1). Where TinyML is concerned, optimization throughout the workflow is essential because of limited resources within the embedded system.

For instance, TinyML resource requirements are a processing speed of 1 to 400 MHz, 2 to 512 KB of RAM, and 32 KB to 2 MB of storage (Flash). Also, at 150 µW to 23.5 mW, working within such a small power budget often proves challenging.
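To make these limits concrete, the sketch below estimates whether a model’s weights would fit within a given Flash budget. Only the 2 MB upper bound comes from the figures above; the parameter count and the helper names (`model_size_bytes`, `fits_in_flash`) are hypothetical, and the estimate ignores application code and runtime overhead.

```python
FLASH_BUDGET_BYTES = 2 * 1024 * 1024  # upper end of the 32 KB to 2 MB range


def model_size_bytes(num_params: int, bytes_per_param: int) -> int:
    """Rough storage needed for the weights alone (no runtime, no code)."""
    return num_params * bytes_per_param


def fits_in_flash(num_params: int, bytes_per_param: int,
                  budget: int = FLASH_BUDGET_BYTES) -> bool:
    """True if the weights alone fit within the given Flash budget."""
    return model_size_bytes(num_params, bytes_per_param) <= budget


NUM_PARAMS = 1_000_000  # hypothetical model size, for illustration only

fp32_fits = fits_in_flash(NUM_PARAMS, 4)  # 32-bit floats: 4 bytes per weight
int8_fits = fits_in_flash(NUM_PARAMS, 1)  # 8-bit integers: 1 byte per weight

print(f"32-bit weights fit in 2 MB: {fp32_fits}")
print(f"8-bit weights fit in 2 MB: {int8_fits}")
```

Under these assumptions, the same model fits only when each weight is stored in a single byte, which is why the size of each parameter matters as much as the number of parameters.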

Figure 1. A simplified AI workflow. The model deployment must itself feed data back into the flow, possibly even influencing the collection of data. (Microchip)

There is a greater consideration, or rather a trade-off, when it comes to embedding AI into a resource-restricted embedded system. Models are crucial to system behavior, but designers often have to compromise between model quality/accuracy, which influences system reliability/dependability, and performance, chiefly operating speed and power draw.

Another key factor is deciding which type of AI/ML to use. Generally, three types of algorithms can be used: supervised, unsupervised, and reinforcement learning.

Creating Viable Solutions

Even designers who understand AI and ML well may struggle to optimize each stage of the AI/ML workflow and strike the right balance between model accuracy and system performance. So, how can embedded designers with no previous experience address the challenges?

Firstly, don’t lose sight of the fact that models deployed on resource-restricted IoT devices will be efficient if the model is small and the AI task is limited to solving a simple problem.

Fortunately, the arrival of ML (and particularly TinyML) in the embedded systems space has resulted in new (or enhanced) integrated development environments (IDEs), software tools, architectures, and models, many of which are open source. For example, TensorFlow™ Lite for Microcontrollers (TF Lite Micro) is a free and open-source software library for ML and AI. It was designed to implement ML on devices with only a few KB of memory. Also, programs can be written in Python, which is also open source and free.

As for IDEs, Microchip’s MPLAB® X is an example of one such environment. This IDE can be used with the company’s MPLAB ML, an MPLAB X plug-in specially developed to build optimized AI IoT sensor recognition code. Powered by AutoML, MPLAB ML fully automates each step of the AI/ML workflow, eliminating the need for repetitive, tedious, and time-consuming model building. Feature extraction, training, validation, and testing ensure optimized models that satisfy the memory constraints of microcontrollers and microprocessors, allowing developers to quickly create and deploy ML solutions on Microchip Arm® Cortex-based 32-bit MCUs or MPUs.

Optimizing Workflow

Workflow optimization tasks can be simplified by starting with off-the-shelf datasets and models. For instance, if an ML-enabled IoT device needs image recognition, starting with an existing dataset of labeled static images and video clips for model training (testing and evaluating) makes sense, noting that labeled data is required for supervised ML algorithms.

Many image datasets already exist for computer vision applications. However, as they are intended for PC-, server- or cloud-based applications, they tend to be large. ImageNet, for instance, contains more than 14 million annotated images.

Depending on the ML application, only a few subsets might be required; say, many images of people but only a few of inanimate objects. For instance, if ML-enabled cameras are to be used on a construction site, they could immediately raise an alarm if a person not wearing a hard hat comes into their field of view. The ML model will need to be trained but, possibly, using only a few images of people wearing or not wearing hard hats. However, there might need to be a larger dataset for hat types and a sufficient range within the dataset to allow for various factors such as different lighting conditions.
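As a rough sketch of that idea, the snippet below picks a class-filtered, size-capped subset from a list of labeled samples. The file names, label strings, and the `select_subset` helper are all hypothetical; a real dataset would come with its own annotation format.

```python
from collections import defaultdict


def select_subset(samples, wanted_labels, max_per_label):
    """Keep at most `max_per_label` samples for each wanted label."""
    counts = defaultdict(int)
    subset = []
    for path, label in samples:
        if label in wanted_labels and counts[label] < max_per_label:
            subset.append((path, label))
            counts[label] += 1
    return subset


# Hypothetical labeled samples: (file name, label)
samples = [
    ("img_001.jpg", "person_hard_hat"),
    ("img_002.jpg", "person_no_hard_hat"),
    ("img_003.jpg", "forklift"),
    ("img_004.jpg", "person_hard_hat"),
    ("img_005.jpg", "person_hard_hat"),
    ("img_006.jpg", "person_no_hard_hat"),
]

subset = select_subset(
    samples,
    wanted_labels={"person_hard_hat", "person_no_hard_hat"},
    max_per_label=2,
)
for path, label in subset:
    print(path, label)
```

Capping the count per label keeps the classes balanced; the cap itself would be raised where more variety, such as different hat types or lighting conditions, is needed.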

Having the right live (data) inputs and dataset, preparing the data, and training the model account for steps 1 to 3 in Figure 1. Model optimization (step 4) is usually a case of compression, which helps reduce memory requirements (RAM during processing and NVM for storage) and processing latency.

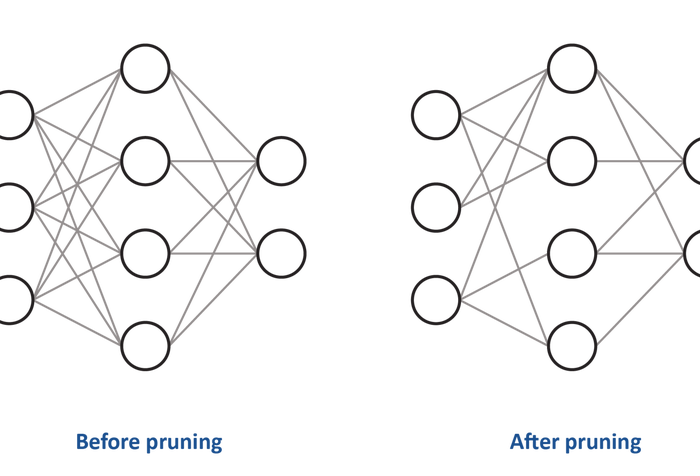

Regarding the processing, many AI algorithms, such as convolutional neural networks (CNNs), struggle with complex models. A popular compression technique is pruning (see Figure 2), of which there are several types, including weight pruning, unit/neuron pruning, and iterative pruning.

Figure 2. Pruning reduces the density of the neural network. Above, the weight of some of the connections between the neurons has been set to zero. (Microchip)
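Weight pruning can be illustrated with a few lines of NumPy. This is a minimal sketch of magnitude-based pruning, one common way of choosing which weights to zero; the article does not say which criterion is used in Figure 2, and the `prune_by_magnitude` helper and the example weights are hypothetical.

```python
import numpy as np


def prune_by_magnitude(weights: np.ndarray, sparsity: float) -> np.ndarray:
    """Return a copy of `weights` with the smallest-magnitude entries zeroed.

    `sparsity` is the fraction of entries to zero (0.0 <= sparsity < 1.0).
    Entries tied with the cut-off magnitude are zeroed as well.
    """
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(round(sparsity * weights.size))  # number of weights to drop
    if k == 0:
        return weights.copy()
    magnitudes = np.abs(weights).ravel()
    threshold = np.partition(magnitudes, k - 1)[k - 1]  # k-th smallest value
    return np.where(np.abs(weights) > threshold, weights, 0.0)


# Hypothetical weights of one small layer
layer = np.array([[0.10, -0.90, 0.40],
                  [0.05, 0.70, -0.20]])

pruned = prune_by_magnitude(layer, sparsity=0.5)
print(pruned)
```

Zeroed weights only save memory or computation when the model is then stored or executed in a form that exploits the sparsity, so this step is a starting point rather than a complete optimization.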

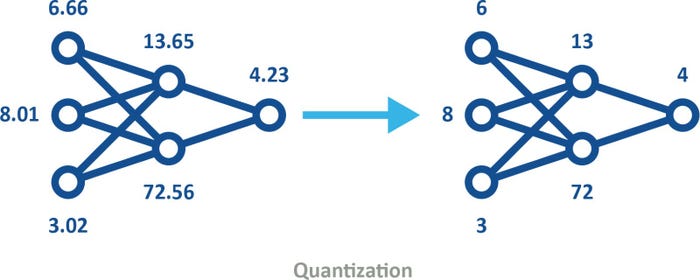

Quantization is another popular compression technique. This process converts data in a high-precision format, such as 32-bit floating point (FP32), to a lower-precision format, say an 8-bit integer (INT8). The use of quantized models (see Figure 3) can be factored into machine training in one of two ways.

•Post-training quantization involves using models in, say, FP32 format and, when the training is considered complete, quantizing for deployment. For example, standard TensorFlow can be used for initial model training and optimization on a PC. The model can then be quantized and, through TensorFlow Lite, embedded into the IoT device.

•Quantization-aware training emulates inference-time quantization, creating a model that downstream tools will use to produce quantized models.

Figure 3. Quantized models use lower precision, thus reducing memory and storage requirements and improving energy efficiency, while still preserving the same shape. (Microchip)
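The arithmetic behind an FP32-to-INT8 conversion can be sketched in NumPy. The example assumes a simple symmetric, per-tensor scheme with a single scale factor; this is an illustrative choice, not necessarily what TensorFlow Lite or MPLAB ML does internally, and `quantize_int8` and `dequantize` are hypothetical helper names.

```python
import numpy as np


def quantize_int8(x: np.ndarray):
    """Map float values to int8 using one scale factor for the whole tensor."""
    max_abs = float(np.max(np.abs(x)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(x / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q: np.ndarray, scale: float) -> np.ndarray:
    """Recover approximate float values from the int8 representation."""
    return q.astype(np.float32) * scale


# Hypothetical FP32 weights standing in for one trained layer
rng = np.random.default_rng(seed=0)
weights = rng.normal(size=(64, 32)).astype(np.float32)

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = float(np.max(np.abs(weights - restored)))

print(f"FP32 storage: {weights.nbytes} bytes, INT8 storage: {q.nbytes} bytes")
print(f"Shape preserved: {q.shape == weights.shape}")
print(f"Largest round-trip error: {max_error:.5f} (scale = {scale:.5f})")
```

The tensor keeps its shape while its storage drops to a quarter, and each value is off by at most about half a quantization step.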

While quantization is useful, it shouldn’t be used excessively, as it can be analogous to compressing a digital image by representing colors with fewer bits, or by using fewer pixels.
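The cost of quantizing too aggressively can be seen by varying the bit width. The sketch below applies a symmetric rounding scheme (an assumption made for illustration) to random data and measures the mean reconstruction error at 8, 4, and 2 bits; `quantization_error` is a hypothetical helper.

```python
import numpy as np


def quantization_error(x: np.ndarray, bits: int) -> float:
    """Mean absolute error after symmetric quantization to `bits` bits."""
    levels = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits, 1 for 2 bits
    scale = float(np.max(np.abs(x))) / levels
    q = np.clip(np.round(x / scale), -levels, levels)
    return float(np.mean(np.abs(x - q * scale)))


rng = np.random.default_rng(seed=0)
values = rng.normal(size=10_000)  # hypothetical data, for illustration only

errors = {bits: quantization_error(values, bits) for bits in (8, 4, 2)}
for bits, err in errors.items():
    print(f"{bits}-bit: mean absolute error = {err:.4f}")
```

As with the image analogy, each reduction in bit width leaves fewer distinct values to represent the data, so the error grows as the format shrinks.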

Summary

AI is now well and truly in the embedded systems space. However, this democratization means design engineers who haven’t previously needed to understand AI and ML are faced with the challenges of implementing AI-based solutions in their designs.

While the challenge of creating ML applications can be daunting, it is not new, at least not for seasoned embedded system designers. The good news is that a wealth of information (and training) is available within the engineering community, along with IDEs such as MPLAB X, model builders such as MPLAB ML, and open-source datasets and models. This ecosystem helps engineers with varying levels of understanding speed AI and ML solutions that can now be implemented on 16-bit and even 8-bit microcontrollers.

Yann LeFaou is Associate Director for Microchip’s touch and gesture business unit. In this role, LeFaou leads a team developing capacitive touch technologies and also drives the company’s machine learning (ML) initiative for microcontrollers and microprocessors. He has held a series of successive technical and marketing roles at Microchip, including leading the company’s global marketing activities for capacitive touch, human machine interface, and home appliance technology. LeFaou holds a degree from ESME Sudria in France.